Latent Wavelet

Diffusion

For Ultra-High-Resolution Image Synthesis

1Sapienza University of Rome · 2Singapore Management University · 3EMBL

For Ultra-High-Resolution Image Synthesis

1Sapienza University of Rome · 2Singapore Management University · 3EMBL

High-resolution image synthesis remains a core challenge in generative modeling, particularly in balancing computational efficiency with the preservation of fine-grained visual detail. We present Latent Wavelet Diffusion (LWD), a lightweight training framework that significantly improves detail and texture fidelity in ultra-high-resolution (2K-4K) image synthesis. LWD introduces a novel, frequency-aware masking strategy derived from wavelet energy maps, which dynamically focuses the training process on detail-rich regions of the latent space. This is complemented by a scale-consistent VAE objective to ensure high spectral fidelity. The primary advantage of our approach is its efficiency: LWD requires no architectural modifications and adds zero additional cost during inference, making it a practical solution for scaling existing models. Across multiple strong baselines, LWD consistently improves perceptual quality and FID scores, demonstrating the power of signal-driven supervision as a principled and efficient path toward high-resolution generative modeling.

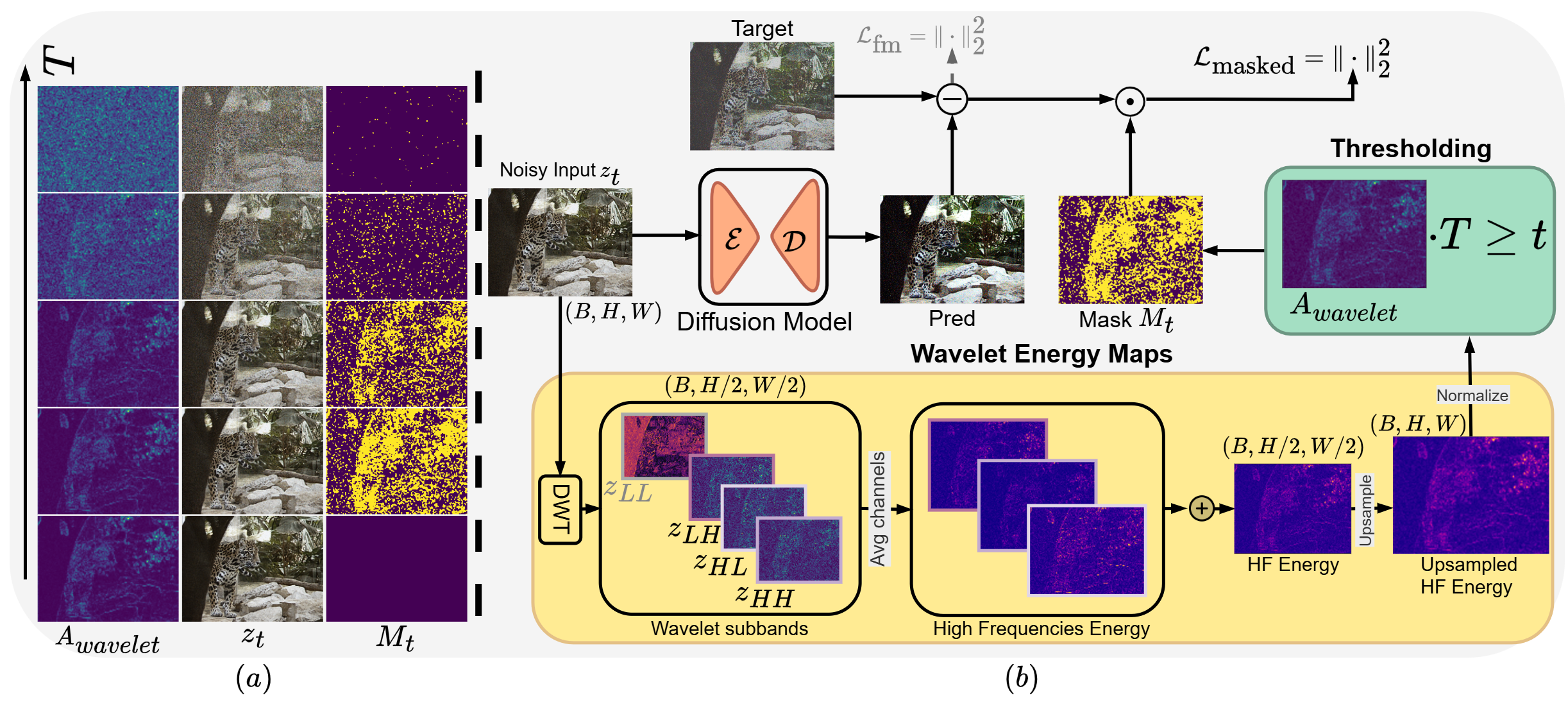

LWD is built on three tightly integrated stages that work together to solve the uniform-supervision bottleneck at ultra-high resolution.

Standard VAEs optimize for reconstruction, not spectral accuracy. The encoder/decoder introduces its own HF noise and aliasing, making the latent code "dirty." We cannot guide training with garbage saliency maps.

We fine-tune the VAE with a multi-resolution scale-consistency loss. The key insight: real-world structures are self-similar across scales, while compression artifacts are not. We enforce that encoding a downscaled image should be spectrally identical to downscaling the full-res encoding.

We apply a fast, non-trainable 1-level Discrete Wavelet Transform (Haar wavelet) to the noisy latent zt at each training step. This decomposes it into four subbands: LL (approximation), LH (horizontal HF), HL (vertical HF), HH (diagonal HF).

We aggregate the detail subbands into a spatial energy map — bright regions are structurally rich (hair, foliage), dark regions are simple (sky, flat walls).

High-frequency detail is the first thing destroyed by noise. At high timesteps (lots of noise), the saliency map is itself noisy — we must not rely on it for fine-grained masking. Our mask follows a curriculum:

High noise (high t): Mask less — force the model to learn global structure (LL band). Low noise (low t): Mask aggressively — focus only on the most salient HF regions. The threshold tightens as detail becomes recoverable.

LWD is model-agnostic: it improves perceptual quality (FID ↓, LPIPS ↓) and dramatically improves texture fidelity (GLCM ↑) on both diffusion and flow-matching architectures, across 2K and 4K resolutions.

| Model | FID ↓ | CLIPScore ↑ | Aesthetics ↑ | GLCM ↑ | Compression ↓ |

|---|---|---|---|---|---|

| SD3-F16 | 43.82 | 31.50 | 5.91 | 0.75 | 11.23 |

| SD3-Diff4k-F16 | 40.18 | 34.04 | 5.96 | 0.79 | 11.73 |

| LWD + SD3-F16 | 38.74 | 34.94 | 6.17 | 0.74 | 11.99 |

| PixArt-Sigma-XL | 39.13 | 35.02 | 6.43 | 0.79 | 13.66 |

| LWD + PixArt-Sigma-XL | 36.14 | 35.21 | 6.27 | 0.87 | 6.05 |

| Sana-1.6B | 32.06 | 35.28 | 6.15 | 0.93 | 24.01 |

| LWD + Sana-1.6B | 34.30 | 35.58 | 6.23 | 0.78 | 27.34 |

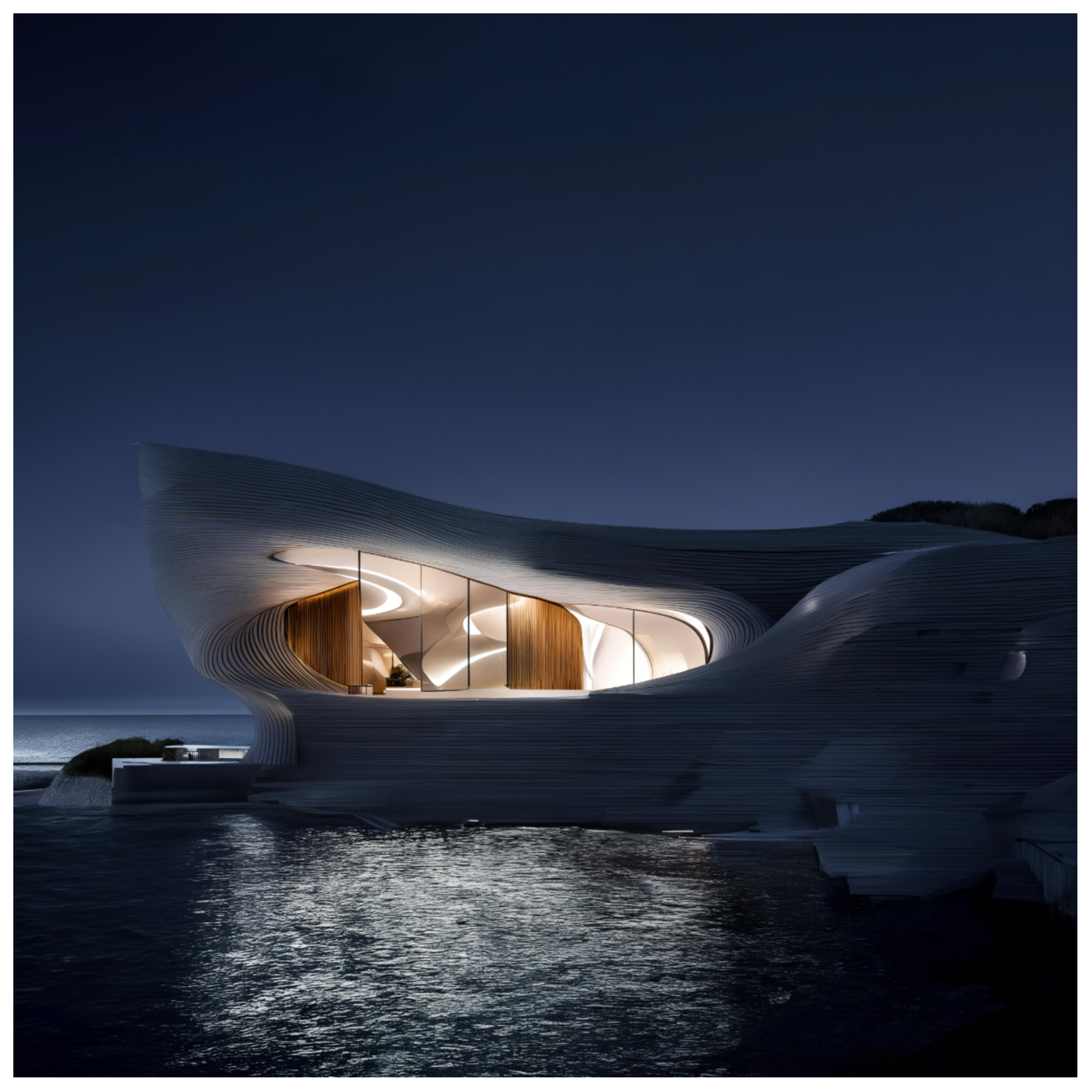

The improvements are most visible in detail-rich regions: hair, fabric, foliage, fine architecture. Baseline models exhibit spectral collapse — blurring and loss of sharp edges. LWD-enhanced models preserve the intricate structures.

Choose a model with the slider, then drag each image divider to compare baseline output against LWD-enhanced output.

If you find LWD useful for your research, please consider citing our paper.

@inproceedings{sigillo2026latent,

title={Latent Wavelet Diffusion For Ultra High-Resolution Image Synthesis},

author={Luigi Sigillo and Shengfeng He and Danilo Comminiello},

booktitle={The Fourteenth International Conference on Learning Representations},

year={2026},

url={https://openreview.net/forum?id=5og80LMVxG}

}